Let’s be honest. For a long time, the evaluation of teaching felt like a formal report card—something done to you, not with you. But in the world of online learning, that old-school thinking is a recipe for failure.

Today, smart evaluation is your secret weapon. It’s the process of systematically gathering and analysing information about your teaching so you can actually improve it and make sure your students are succeeding.

Why Modern Teaching Evaluation Is a Game Changer

Think of teaching evaluation less as a critique and more as a compass for your online academy. It gives you a clear direction on where to go next, replacing guesswork with genuine evidence of what works.

This shift from judgement to improvement is everything. For online coaches, creators, and tutors, understanding what truly connects with learners is what separates a good course from a great one. It helps you pinpoint exactly which modules are hitting the mark, which concepts are causing confusion, and where your teaching style is having the biggest impact.

Moving from Guesswork to Growth

When you get systematic about evaluating your teaching, you can:

Find Your Strengths (and Weaknesses): Discover which parts of your course are gold and which areas need a rethink. Are students consistently dropping off at a specific video? Are they acing one quiz but bombing another? This is the data you need.

Boost Student Engagement: By actually listening to feedback and observing student behaviour, you can create far more compelling content that keeps learners hooked from start to finish.

Improve Learning Outcomes: At the end of the day, it's all about student success. Evaluation makes sure your teaching methods are genuinely helping learners pick up new skills and knowledge.

This isn't just a challenge for solo creators; it's a massive focus for large-scale education systems, too. For instance, India’s system oversees 14.71 lakh schools and over one crore teachers, making robust evaluation a critical tool for maintaining quality. High student-teacher ratios make a structured evaluation of teaching vital for spotting performance gaps and improving outcomes. You can explore more about India’s education sector trends and what works.

Ultimately, teaching evaluation is about turning insights into action. It's a continuous cycle of listening, analysing, and refining your approach to deliver an exceptional learning experience that drives results and sets your academy apart from the rest.

Formative vs Summative Evaluation Explained

When we talk about evaluating our teaching, it's easy to get lost in jargon. But it really boils down to two core ideas: formative and summative evaluation. Getting the hang of the difference isn't just for academics—it's about knowing when to tweak your course on the fly versus when to step back and measure its overall success.

Here’s a simple way to think about it.

Imagine you're a chef cooking a soup. You taste it while it’s simmering, adding a pinch of salt here or a bit more broth there to get it just right. That’s formative evaluation. It’s done during the process, it’s diagnostic, and it’s all about making immediate improvements.

Now, picture a food critic tasting the final, plated dish and writing a review for a magazine. That’s summative evaluation. It’s the final judgement on the overall quality after everything is done.

Both are critical for a great outcome, but they serve completely different purposes at different times.

Formative Evaluation: Your In-Progress Checkup

Formative evaluation is the feedback loop you run while your course is in progress. Think of it as taking small, frequent snapshots of student understanding so you can make mid-course corrections. The goal isn’t to give your teaching a final grade, but to constantly refine the learning experience as it’s happening.

This is all about progress and development. For online course creators, this is your most powerful tool for staying agile and responding to what your students actually need, right when they need it.

Formative evaluation isn't about grading; it's about guiding. It answers the question, "How can we make this learning experience better right now?" This approach helps you spot confusion before it snowballs into frustration.

A few classic formative methods you can use are:

Quick polls during a live session to check if everyone’s on the same page.

“Minute papers” where students spend sixty seconds summarising the biggest takeaway.

Monitoring discussion forums to see which questions keep popping up.

Analysing early quiz results to pinpoint concepts that are tripping students up.
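As a rough illustration of that last method, the sketch below works out the share of correct answers for each quiz question and flags the ones most students miss. The response data, the question labels, and the 50% cut-off are made-up assumptions for the example, not output from any particular platform.

```python
# Hypothetical early-quiz responses: one dict per student,
# mapping question label -> True (correct) / False (incorrect).
responses = [
    {"Q1": True,  "Q2": False, "Q3": True},
    {"Q1": True,  "Q2": False, "Q3": False},
    {"Q1": True,  "Q2": True,  "Q3": True},
    {"Q1": False, "Q2": False, "Q3": True},
]

def correct_rate_by_question(responses):
    """Return {question: fraction of students who answered correctly}."""
    totals = {}
    correct = {}
    for student in responses:
        for question, is_correct in student.items():
            totals[question] = totals.get(question, 0) + 1
            correct[question] = correct.get(question, 0) + int(is_correct)
    return {q: correct[q] / totals[q] for q in totals}

def flag_hard_questions(rates, threshold=0.5):
    """Questions whose correct rate falls below the assumed threshold."""
    return sorted(q for q, rate in rates.items() if rate < threshold)

rates = correct_rate_by_question(responses)
hard = flag_hard_questions(rates)
print(rates)
print("Revisit:", hard)
```

A flagged question is only a prompt to look closer: the concept may be poorly explained, or the question itself may be badly worded.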

Summative Evaluation: The Final Verdict

In contrast, summative evaluation happens after all the teaching is done. It’s the final "summary" of how effective the course was. This is where things like end-of-course surveys, final exam scores, and overall completion rates come in. You’re not using this data to make changes to the current cohort; you’re using it to make bigger, strategic decisions about the future.

Summative data helps you answer the big-picture questions:

Did students actually achieve the learning outcomes I set out?

Overall, how satisfied were learners with the course?

Should I run this course again? If so, what major changes should I make?

When you combine both, you get a powerful, complete picture of your teaching effectiveness. Formative feedback lets you steer the ship day-to-day, while summative data helps you chart the course for your next big voyage.

Formative vs Summative Evaluation at a Glance

This quick comparison will help you decide which evaluation approach fits your needs at different stages of your course.

| Attribute | Formative Evaluation (During the Course) | Summative Evaluation (After the Course) |
| --- | --- | --- |
| Purpose | To improve and adjust teaching while it's happening. | To judge the overall effectiveness of the course. |
| Timing | Ongoing, frequent, and in real-time. | At the end of a unit, module, or entire course. |
| Feedback Goal | Diagnostic and developmental. Identifies areas for immediate improvement. | Judgemental. Measures final achievement and success. |
| Stakes | Low-stakes. Usually doesn't contribute to a final grade. | High-stakes. Often tied to final grades or certification. |
| Example Methods | Quick polls, discussion forum monitoring, minute papers, early quizzes. | Final exams, end-of-course surveys, completion rates, capstone projects. |
| Key Question | "How can we make this better right now?" | "How well did this work overall?" |

Ultimately, a smart evaluation plan doesn't choose one over the other. It weaves them together to create a cycle of continuous improvement.

Essential Frameworks and Tools for Evaluation

Moving from theory to practice in teaching evaluation means you need a well-rounded toolkit. Relying on a single source of data is like trying to understand a complex film by watching just one scene—you’ll miss the full story. To get a genuine 360-degree view, you need to combine several instruments, each offering a unique perspective on your teaching.

This approach ensures your conclusions are balanced, fair, and, most importantly, actionable. Let’s break down the four cornerstones every online educator should have in their evaluation arsenal.

Student Feedback Surveys

The most direct way to understand the student experience? Just ask them. Student feedback surveys are invaluable for capturing how learners feel about your course content, your delivery style, and their overall satisfaction. They give you the human side of the story that raw numbers often miss.

But be careful. The quality of the feedback you get depends entirely on the quality of your questions. Vague or leading questions will only get you useless answers.

To gather genuinely helpful input, you should:

Ask specific questions: Instead of a generic "Did you like the video?", try "What was the most helpful concept in the Module 2 video, and what part was the least clear?"

Use a mix of question types: Combine multiple-choice questions for clean, quantitative data with open-ended questions that invite detailed, thoughtful feedback.

Time it right: Send out short, targeted surveys after key modules (formative) and save the more comprehensive one for the very end (summative).

For tools designed to gather student input, a dedicated Course Feedback template can help you collect these valuable insights.

Peer Reviews and Collaboration

Inviting a trusted colleague to review your course material or observe a live session brings a fresh, expert perspective. A peer can spot things you might miss after staring at the same content for weeks—like a confusing explanation or a missed opportunity to deepen a learning activity.

This isn’t about judgement; it's about collaborative growth. A fellow instructor can offer targeted advice from a teaching standpoint, focusing on pedagogy and content structure in a way students simply can't.

Learning Analytics

Modern learning platforms are goldmines of behavioural data. Learning analytics is all about tracking and interpreting the digital footprints your students leave behind to understand how they engage and where they're making progress.

This data-driven approach moves beyond what students say they do and shows you what they actually do. It reveals patterns in real-time, allowing you to make immediate, evidence-based adjustments to your teaching strategy.

For example, Skolasti’s built-in analytics can show you which videos are being re-watched most often, where students tend to drop off in a lesson, and which quiz questions are causing the most trouble. You can explore these features to see how data can directly inform your teaching improvements.

Student Assessments and Performance

At the end of the day, the most important measure of great teaching is whether students are actually learning. Assessments like quizzes, projects, and final exams provide concrete proof of whether students have mastered the material.

These summative tools are especially crucial in large-scale systems where consistency is key. The Economic Survey 2025–26 highlights ongoing improvements in India's education system, but OECD data reveals a high student-teacher ratio of 27.2 in primary schools. This makes precise, well-designed assessments essential for measuring a teacher's impact when one-on-one attention is stretched thin.

By combining these four tools, you create a robust system for evaluating your teaching that is comprehensive, balanced, and ready to drive meaningful improvement.

Designing Your Step-by-Step Evaluation Plan

A solid evaluation framework is your personalised roadmap for continuous improvement. Without a clear plan, collecting data can feel chaotic and lead to a jumble of disconnected feedback. A structured approach, however, turns evaluation into a powerful and consistent engine for growth.

Think of it as building a house. You wouldn't start ordering bricks and windows without a blueprint. An evaluation plan is that blueprint, ensuring every piece of data you collect serves a specific purpose and contributes to a stronger, more effective course.

This simple, four-step process will guide you in creating an evaluation plan that fits your online academy perfectly.

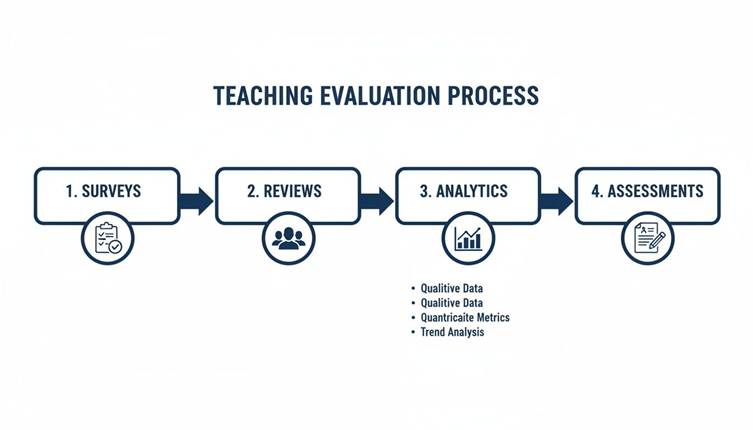

This infographic visualises the core tools used in a cyclical evaluation process, moving from direct feedback to data-driven insights.

The flow highlights how combining different instruments—from subjective student surveys to objective performance assessments—creates a comprehensive picture of teaching effectiveness.

Step 1: Define Clear Goals

Before you even think about tools, you need to start with your "why." What do you actually want to measure? Vague goals like "improve the course" are just wishes; they aren't actionable.

Get specific. Are you trying to boost student completion rates by 15%? Do you want to slash the number of support tickets about Module 3? Or maybe your aim is to see a measurable jump in student confidence on a key skill.

Your goals will dictate everything that follows. They act as a filter, helping you focus only on the data that truly matters for your evaluation of teaching.

Step 2: Select the Right Instruments

With clear goals in hand, you can now pick the right tools for the job. You don't need to use every method for every goal. The smart move is to mix and match them for a balanced view.

To measure student satisfaction: An end-of-course survey is your go-to.

To diagnose confusion on a specific topic: Use learning analytics to spot where students drop off, then follow up with a quick, targeted poll.

To validate learning outcomes: Nothing beats quizzes and final projects.

A crucial part of this is knowing how to write effective survey questions that get you genuinely insightful feedback, not just vague compliments.

A common mistake is over-relying on a single source of data, like student satisfaction surveys. While important, they don’t tell you if students are actually learning. Combining satisfaction ratings with performance data provides a much more reliable picture.
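To make that combination concrete, here is a minimal sketch that reads satisfaction and performance side by side for each module. The module names, the averages, and both cut-offs (4.0 out of 5 for satisfaction, 70% for scores) are invented assumptions; pick thresholds that suit your own course.

```python
# Hypothetical per-module averages: satisfaction on a 1-5 survey scale,
# avg_score as a quiz percentage. All numbers are invented for illustration.
modules = {
    "Module 1": {"satisfaction": 4.6, "avg_score": 82},
    "Module 2": {"satisfaction": 4.5, "avg_score": 58},
    "Module 3": {"satisfaction": 3.1, "avg_score": 79},
}

def classify(satisfaction, avg_score, min_satisfaction=4.0, min_score=70):
    """Label a module using both signals; the cut-offs are assumptions."""
    liked = satisfaction >= min_satisfaction
    learned = avg_score >= min_score
    if liked and learned:
        return "healthy"
    if liked:
        return "liked but not learned"
    if learned:
        return "learned but not liked"
    return "needs rework"

labels = {name: classify(m["satisfaction"], m["avg_score"])
          for name, m in modules.items()}
for name, label in labels.items():
    print(f"{name}: {label}")
```

In this made-up data, Module 2 is the one a satisfaction survey alone would miss: students rate it highly, yet their scores suggest the material isn't sticking.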

Step 3: Establish a Practical Timeline

Next up, map out when you’ll collect all this data. A good timeline isn't just a one-off event; it integrates both formative and summative touchpoints to create a continuous feedback loop.

Mid-Module Check-ins: Deploy quick polls or one-question surveys right after a tough lesson.

End-of-Module Feedback: Use short surveys to get thoughts while the content is still fresh in your students' minds.

End-of-Course Survey: Launch a comprehensive survey to capture the big picture and overall impressions.

Ongoing Analytics Review: Block out time every week or two just to review engagement data.

Step 4: Communicate the Process

Finally, and this is a big one, be transparent with your learners. Let them know why you're collecting feedback and exactly how their input will be used to make the course better for everyone.

When students understand their voice matters, they’re far more likely to give you thoughtful, constructive responses. This simple act builds trust and fosters a collaborative learning environment.

Putting Your Evaluation Plan into Action with Skolasti

A great plan is your blueprint, but technology is what actually builds the house. This is where a platform like Skolasti comes in, bridging the gap between your evaluation strategy and actually getting it done. It gives you the tools to automate how you collect and analyse data, turning the evaluation of teaching from a manual headache into a smooth, integrated part of your process.

Instead of juggling different apps for surveys, quizzes, and analytics, you can run your entire plan from a single dashboard. That integration is the key. It makes both formative and summative evaluation a natural part of how you teach, not just another task tacked on at the end.

Leverage Built-In Assessments for Summative Data

Your evaluation plan needs hard numbers to measure final learning outcomes. That's what summative data is for, and Skolasti’s built-in assessment tools are designed to deliver it. You can build quizzes, assignments, and final exams right inside your course modules.

This gives you concrete evidence of what your students have actually mastered. The best part? The results automatically flow into your analytics dashboard, giving you a clear, quantitative measure of teaching effectiveness without ever leaving the platform.

This approach fits perfectly with the larger shift in education toward data-driven teaching. For instance, in 2025, schools in India began to prioritise competency-based learning over rote memorisation, using continuous assessment to guide each student's progress. This is exactly what Skolasti empowers creators to do: use data to understand what's working and scale up high-quality learning. You can discover more insights about transforming education in India on economictimes.indiatimes.com.

Uncover Formative Insights with the Analytics Dashboard

Formative evaluation is all about making smart adjustments while the course is still running, and for that, you need real-time data. Think of the Skolasti analytics dashboard as your command centre for these ongoing insights.

It gives you a clean visual breakdown of student engagement, progress, and performance across your entire course.

You can track key metrics like completion rates, assessment scores, and engagement levels at a glance. When you spot a trend, such as a specific lesson where students are dropping off, you can step in right away to fix it and improve the learning experience.

Deploy the AI Teaching Assistant for Deeper Understanding

Sometimes, the most honest formative feedback comes directly from the questions your students are asking. Skolasti’s AI Teaching Assistant does more than just offer 24/7 support; it’s also an incredibly powerful evaluation tool.

The AI logs common questions and pinpoints topics where learners consistently get stuck. This gives you a direct line into your students' minds, showing you exactly where your course content might need a little more clarity or an extra resource. It’s like having a focus group running for you around the clock.

Key Takeaway: Using an integrated platform automates the heavy lifting of evaluation. You can collect summative data from assessments, monitor formative engagement with analytics, and understand student pain points through the AI—all in one place.

Finally, no evaluation plan is complete without protecting your hard work. Skolasti’s robust security, including DRM and content watermarking, makes sure that your evaluation materials, assessments, and course content stay safe. This protection lets you focus on what really matters: teaching and improving.

If you're ready to see how these features can plug into your own evaluation plan, we'd be happy to provide a personalised tour of the Skolasti platform.

Turning Data into Meaningful Course Improvements

Gathering data is just the beginning. The real value in any evaluation of teaching lies in turning those numbers and comments into tangible action. Raw data is just an ingredient; the magic happens when you interpret it to create a recipe for a better course.

Think of yourself as a detective looking for clues. Your goal is to spot the patterns that reveal exactly where your course shines and where it needs a bit more work. By looking at both the hard numbers and the student stories together, you build a complete picture of the learning experience.

Analysing Your Quantitative Data

Start with the numbers, as they often point you to specific areas to investigate further. This is your objective look at what learners are actually doing.

Look for trends in:

Engagement Metrics: Where are students dropping off? A sudden dip in completion rates for Module 3, for instance, is a major red flag that something isn’t working.

Assessment Scores: Are students consistently struggling with questions on a particular topic? Low average scores on a specific quiz are a clear signal of a potential gap in your teaching.

Content Interaction: Which videos get re-watched the most? This could mean they’re incredibly valuable, or it could mean they’re just plain confusing. Either way, it’s worth a closer look.
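If your platform lets you export completion counts, the drop-off check above takes only a few lines. The sketch below is one way to do it, assuming a simple list of per-module completion counts; the figures are invented for illustration.

```python
# Hypothetical count of students who completed each module, in course order.
completions = [
    ("Module 1", 200),
    ("Module 2", 180),
    ("Module 3", 90),
    ("Module 4", 81),
]

def drop_off_rates(completions):
    """Fraction of students lost between each module and the one before it."""
    rates = []
    for (_, prev_count), (name, count) in zip(completions, completions[1:]):
        lost = (prev_count - count) / prev_count if prev_count else 0.0
        rates.append((name, lost))
    return rates

rates = drop_off_rates(completions)
worst_module, worst_rate = max(rates, key=lambda item: item[1])
for name, rate in rates:
    print(f"{name}: {rate:.0%} drop-off")
print("Largest drop:", worst_module)
```

Comparing each module with the one before it, rather than with the starting enrolment, keeps a single bad module from making every later module look bad too.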

Decoding Qualitative Feedback

Next, dive into the "why" behind those numbers with feedback from surveys, forum posts, or even your AI Teaching Assistant. If your AI TA constantly fields questions about a specific concept, that’s your cue to add more resources or clarify your explanation.

Start categorising this feedback to spot recurring themes. Are multiple students mentioning that the pacing feels too fast? Or that a particular example just didn't land? These patterns are your roadmap for making impactful changes.
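A simple keyword tally is often enough for a first pass at categorising. The sketch below counts how many comments touch each theme; the theme names, keyword lists, and sample comments are all hypothetical, and plain keyword matching will miss phrasing it hasn't been told about, so treat the counts as a starting point rather than a verdict.

```python
from collections import Counter

# Hypothetical theme -> keyword lists; tune these to your own course.
THEMES = {
    "pacing": ["too fast", "rushed", "too slow"],
    "instructions": ["unclear", "confusing", "instructions"],
    "video length": ["too long", "shorter"],
}

# Invented sample comments standing in for survey or forum feedback.
comments = [
    "The pacing felt too fast in week two.",
    "Project instructions were unclear.",
    "Videos are too long for my commute.",
    "Module 3 felt rushed and the instructions were confusing.",
]

def count_themes(comments, themes):
    """Count how many comments mention each theme at least once."""
    counts = Counter()
    for comment in comments:
        text = comment.lower()
        for theme, keywords in themes.items():
            if any(keyword in text for keyword in keywords):
                counts[theme] += 1
    return counts

theme_counts = count_themes(comments, THEMES)
for theme, n in theme_counts.most_common():
    print(theme, n)
```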

The most powerful insights often come from connecting the dots. For example, if your analytics show 70% of students drop off in Module 3 and student comments reveal the project instructions are unclear, you’ve found a clear, actionable problem to solve.

Ultimately, this process turns evaluation from a simple measurement into a cycle of continuous improvement. For more ideas on enhancing your course based on what you find, check out our collection of effective online course tips.

Frequently Asked Questions About Teaching Evaluation

Even the most seasoned course creators run into practical questions when they start digging into teaching evaluation. Let's tackle some of the most common ones we hear from academy owners and online instructors.

How Often Should I Evaluate My Teaching?

The best evaluation isn't a one-off event; it's a continuous habit.

Think of it this way: for formative feedback, you want small, frequent check-ins. A quick poll after a tricky lesson or a weekly glance at your analytics dashboard works wonders. For summative evaluation, save the big-picture questions for end-of-module or end-of-course surveys.

What If I Receive Negative Feedback?

First things first: don't panic. Negative feedback isn’t a personal attack—it's a massive opportunity to get better.

The temptation is to get defensive, but resist it. Instead, hunt for patterns. If one student says your videos are too long, that’s just one data point. But if ten students say the same thing, you've just uncovered a clear path to improvement.

Key Insight: The feedback is about a specific part of your course, not about you as a person. Separate the criticism from your identity and use it as a compass to make targeted changes that help every future student.

Can I Start Small with My Evaluation?

Absolutely. In fact, you should. You don't need to build a complex, multi-layered system on day one.

A fantastic starting point for any evaluation of teaching is to just add one simple tool, like an end-of-course survey. Once you get into the rhythm of analysing that data and making changes, you can slowly layer in other methods like learning analytics or peer reviews. The most important thing is simply to start.

Ready to build a powerful evaluation system without all the guesswork? Skolasti brings analytics, assessments, and an AI Teaching Assistant together on one platform. You get the insights you need to improve your courses and help your students win.

Explore how Skolasti can sharpen your teaching at https://www.skolasti.com.